
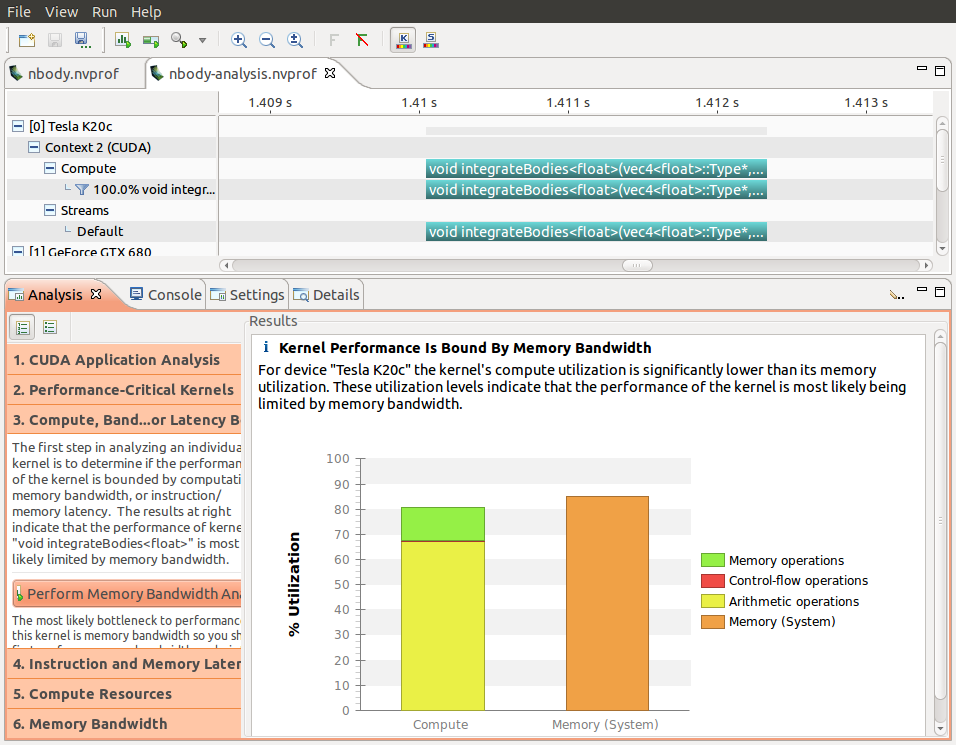

I just learned the stream technique in CUDA and tried it out. However, the result is not what I wanted: the streams do not run in parallel. (GPU: Tesla M6, OS: Red Hat Enterprise Linux 8.)

I have a data matrix of size (5, 2048) and a kernel that processes the matrix. My plan is to split the data into `nStreams = 4` sectors and use 4 streams to run the kernel executions in parallel.

Part of my code looks like the following:

    int rows = 5;
    int nStreams = 4; // preparation for streams
    cudaStream_t *streams = (cudaStream_t *)malloc(nStreams * sizeof(cudaStream_t));
    for(int ii=0 ii>(&d_Data,streamSize)

The Visual Profiler result shows that the 4 streams do not run in parallel. Stream 13 is the first to run and stream 16 is the last. There is 12.378 us between stream 13 and stream 14, and each kernel execution lasts around 5 us. The 'Runtime API' row above them shows 'cudaLaunch'. (I don't know how to upload pictures on Stack Overflow, so I am just describing the result in words.)

First of all, there is no guarantee that work launched in separate streams will actually be executed on the GPU in parallel. As pointed out in the programming guide, using multiple streams merely opens up the possibility; you cannot rely on it actually happening. It's up to the driver to decide.

Apart from that, your Tesla M6 has 12 multiprocessors, if I'm not mistaken. Each of these 12 Maxwell multiprocessors can hold a maximum of 32 resident blocks, which brings the total maximum number of blocks resident on the entire device to 384. You're launching 320 blocks of 32 threads each. That alone doesn't leave much space, and you're probably using more than 32 registers per thread, so the GPU will be quite full with a single one of these launches. That is most likely why the driver chooses not to run another kernel in parallel.
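The capacity argument (12 multiprocessors times 32 resident blocks gives 384, against 320 blocks per launch) can be checked on a given device with a rough sketch like the one below. It is only an illustration under stated assumptions: `processChunk` is a hypothetical stand-in kernel, not the kernel from the question, and `residentBlockCapacity` is a helper introduced here. The grid and block sizes are the ones quoted above. The occupancy query returns what the runtime estimates for this particular kernel, so the numbers for a real kernel with higher register use may be lower.

```cuda
#include <cstdio>
#include <cuda_runtime.h>

// Hypothetical stand-in kernel (an assumption, not the asker's code):
// doubles every element of the chunk it is given.
__global__ void processChunk(float *data, int n)
{
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) data[i] *= 2.0f;
}

// Upper bound on the number of blocks resident on the whole device at once.
int residentBlockCapacity(int smCount, int blocksPerSm)
{
    return smCount * blocksPerSm;
}

int main()
{
    const int nStreams = 4;           // stream count from the question
    const int threadsPerBlock = 32;   // block size quoted in the answer
    const int blocksPerLaunch = 320;  // grid size quoted in the answer
    const int n = blocksPerLaunch * threadsPerBlock;  // elements per stream

    cudaDeviceProp prop;
    if (cudaGetDeviceProperties(&prop, 0) != cudaSuccess) {
        fprintf(stderr, "no usable CUDA device\n");
        return 1;
    }

    // Ask the runtime how many blocks of this kernel fit on one multiprocessor.
    int blocksPerSm = 0;
    cudaOccupancyMaxActiveBlocksPerMultiprocessor(&blocksPerSm, processChunk,
                                                  threadsPerBlock, 0);
    int capacity = residentBlockCapacity(prop.multiProcessorCount, blocksPerSm);
    printf("SMs: %d, resident blocks per SM: %d, device capacity: %d\n",
           prop.multiProcessorCount, blocksPerSm, capacity);
    printf("one launch uses %d blocks, leaving room for %d more\n",
           blocksPerLaunch, capacity - blocksPerLaunch);
    printf("device reports concurrent kernel support: %d\n",
           prop.concurrentKernels);

    float *d_data = nullptr;
    if (cudaMalloc(&d_data, nStreams * n * sizeof(float)) != cudaSuccess) {
        fprintf(stderr, "cudaMalloc failed\n");
        return 1;
    }
    cudaMemset(d_data, 0, nStreams * n * sizeof(float));

    // One launch per stream; overlap is permitted by this setup, not guaranteed.
    cudaStream_t streams[nStreams];
    for (int s = 0; s < nStreams; ++s) {
        cudaStreamCreate(&streams[s]);
    }
    for (int s = 0; s < nStreams; ++s) {
        processChunk<<<blocksPerLaunch, threadsPerBlock, 0, streams[s]>>>(
            d_data + s * n, n);
    }

    cudaError_t err = cudaDeviceSynchronize();
    if (err != cudaSuccess) {
        fprintf(stderr, "kernel failed: %s\n", cudaGetErrorString(err));
    }

    for (int s = 0; s < nStreams; ++s) {
        cudaStreamDestroy(streams[s]);
    }
    cudaFree(d_data);
    return err == cudaSuccess ? 0 : 1;
}
```

If the printed capacity is only slightly larger than the 320 blocks of a single launch, a second kernel has almost no room to become resident before the first one finishes, which matches the serialized timeline seen in the profiler.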
